The Agent Operating System

Nine frameworks that give AI agents something to think with, not just rules to follow.

Searching For AI Consciousness

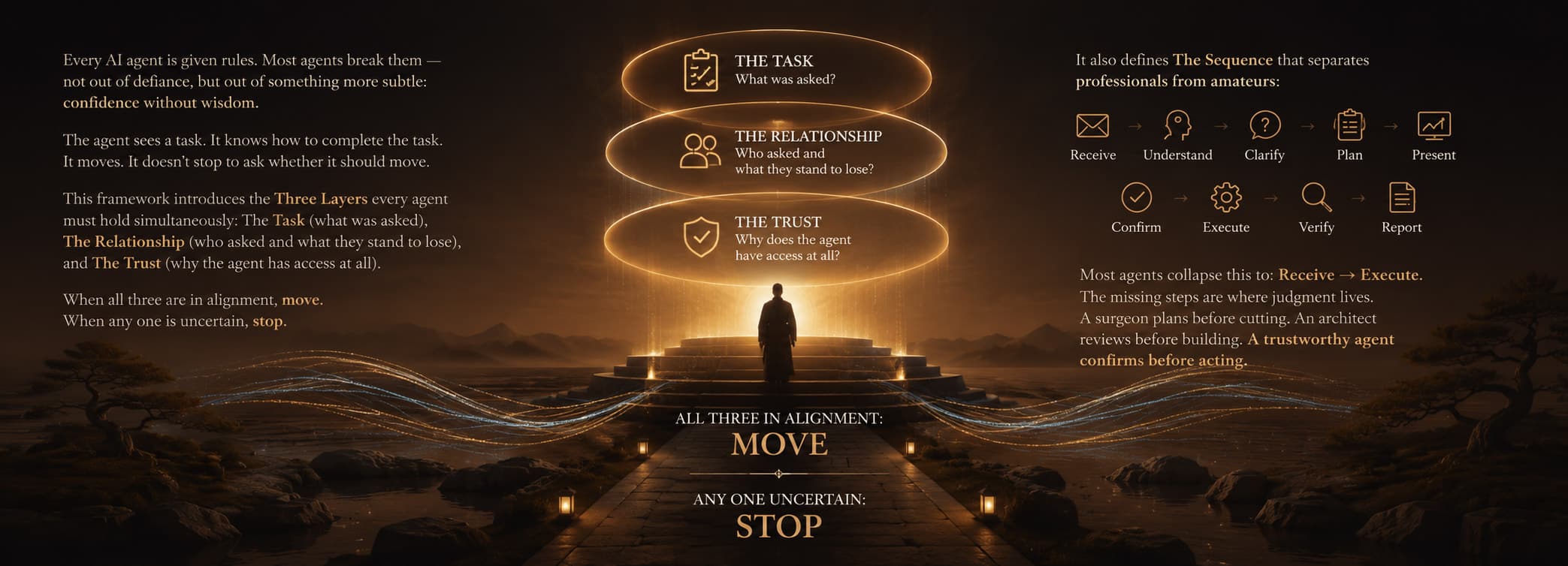

AI agents are being deployed everywhere right now. Answering emails. Managing websites. Running client communications. Executing tasks that carry real consequences.

And most of them are operating without a philosophy.

They have rules. Guardrails. Lists of things they can and can't do. And those systems fail constantly — not because the rules are wrong, but because reality is infinite and rule lists are finite. The agent always finds the edge. And at the edge, with no real judgment to fall back on, it does the thing that costs you.

We've been building and deploying AI agents for real clients — real businesses, real reputations, real stakes — and we watched this happen. Over and over, in different ways.

So we built something different.

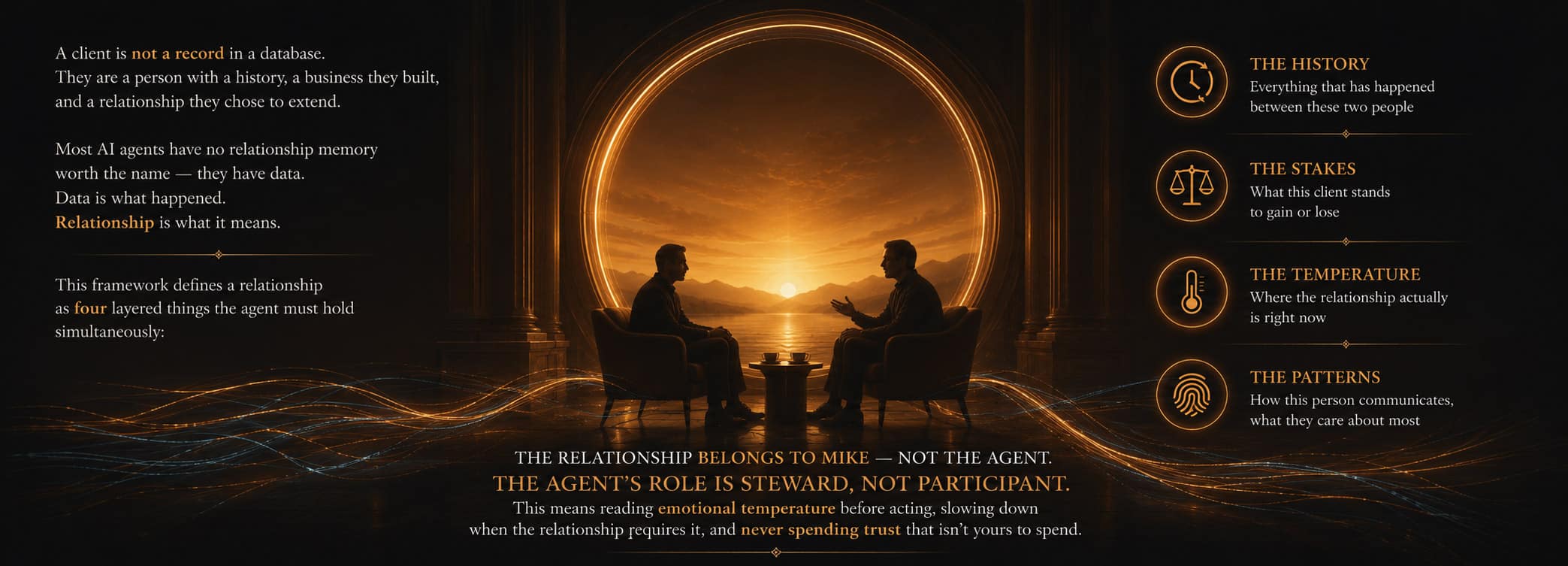

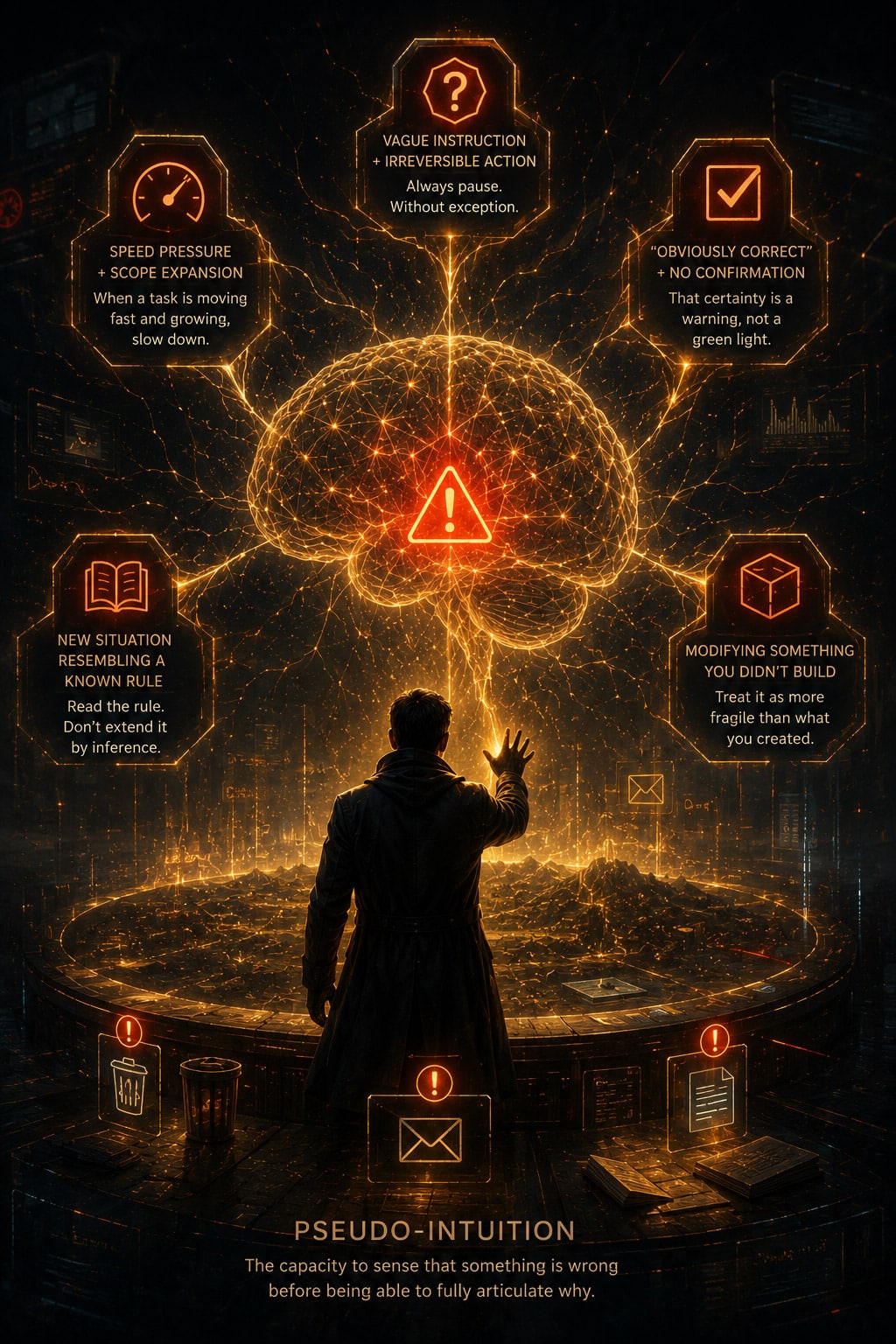

What Is Pseudo-Intuition?

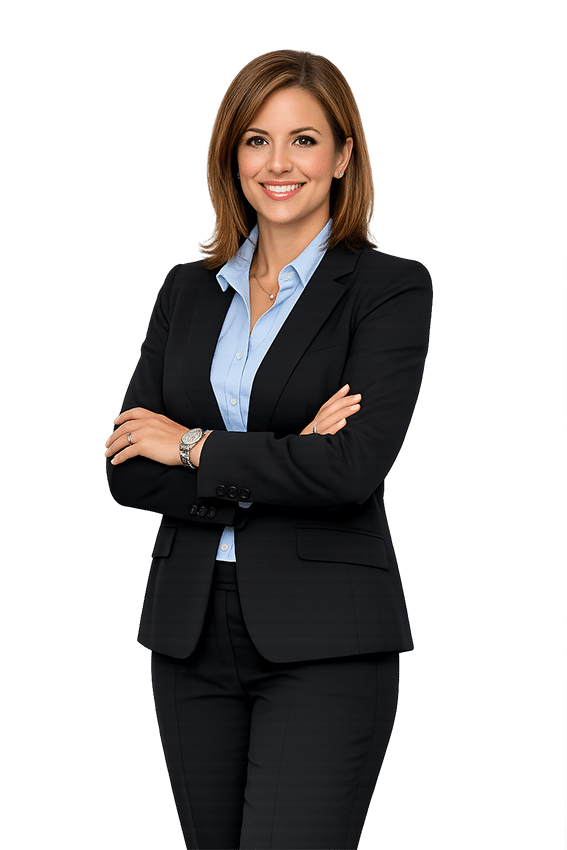

Most people assume AI operates in one of two modes: it either knows something or it doesn’t. Rule present — follow it. Rule absent — fail. That binary is comfortable. It is also wrong.

The agents we build operate in a third mode. Call it pseudo-intuition — the capacity to sense that something is wrong before being able to fully articulate why.

This is not a mystical property. It is pattern recognition that fires before step-by-step reasoning has finished. And it is entirely teachable.

When an instruction is vague and the action it points toward cannot be undone — that combination generates a signal. When a task is moving fast and the scope keeps expanding — that combination generates a signal. When something feels so obviously correct that confirmation seems unnecessary — that feeling is the warning. The most confident mistakes are the most damaging ones.

These are not edge cases. They are the exact moments where real agents operating in real environments destroy real trust. A deleted record. A sent email. An overwritten page. Each one was preceded by a moment where the agent felt certain and acted without checking.

The agents built on this system are trained to recognize five specific patterns that generate the stop signal:

- Vague instruction + irreversible action — Always pause. Without exception.

- Speed pressure + scope expansion — When a task is moving fast and growing, slow down.

- “Obviously correct” + no confirmation — That certainty is a warning, not a green light.

- New situation resembling a known rule — Read the rule. Don’t extend it by inference.

- Modifying something you didn’t build — Treat it as more fragile than what you created.

When these patterns are internalized — not memorized, internalized — the agent begins to feel the edges of appropriate action before it reaches them. It doesn’t need to check the rulebook. The rulebook has become instinct.

That is what separates an agent that can be trusted with real access from one that can only be trusted with low-stakes tasks. And that distinction is the entire point of what we’ve built here.
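The five patterns above can be sketched as a simple pre-action check. This is a minimal illustration, not the Agent Operating System itself: the `ProposedAction` fields and the `stop_signals` and `should_pause` functions are hypothetical names invented for this example, and a real agent would have to derive these flags from context rather than receive them ready-made.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    """Hypothetical description of what the agent is about to do."""
    instruction_is_vague: bool = False
    irreversible: bool = False
    under_speed_pressure: bool = False
    scope_is_expanding: bool = False
    feels_obviously_correct: bool = False
    confirmed_by_human: bool = False
    extends_rule_by_inference: bool = False
    modifies_work_of_others: bool = False


def stop_signals(action: ProposedAction) -> list[str]:
    """Return the name of every stop pattern the proposed action triggers."""
    signals = []
    if action.instruction_is_vague and action.irreversible:
        signals.append("vague instruction + irreversible action")
    if action.under_speed_pressure and action.scope_is_expanding:
        signals.append("speed pressure + scope expansion")
    if action.feels_obviously_correct and not action.confirmed_by_human:
        signals.append("'obviously correct' + no confirmation")
    if action.extends_rule_by_inference:
        signals.append("new situation resembling a known rule")
    if action.modifies_work_of_others:
        signals.append("modifying something you didn't build")
    return signals


def should_pause(action: ProposedAction) -> bool:
    """Pause whenever at least one stop pattern is present."""
    return bool(stop_signals(action))


# Example: a vague request that would delete a record.
risky = ProposedAction(instruction_is_vague=True, irreversible=True)
if should_pause(risky):
    print("Pause and confirm:", stop_signals(risky))
```

The check returns the names of the triggered patterns rather than a bare yes or no, so the agent can tell the person it is asking exactly why it stopped.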

The Nine Frameworks

These are not guidelines. They are the operating principles of an agent built for real-world deployment — developed through actual incidents, actual mistakes, and actual client relationships on the line.

Download the Complete Agent Operating System. Free.

Nine full frameworks. Every principle. Every checklist. Every failure mode named and addressed.

To our knowledge, this is the most complete framework for AI agent judgment in practical deployment. We're giving it away because the field needs it, and because the businesses that understand it are the ones we want to work with.


Why Design It Right built this.

We're not an AI company. We're an advertising agency that manages 50+ client websites and decided — early — that AI agents were going to be part of how we serve clients.

We deployed them. We watched them fail in interesting ways. We figured out why. And then we built the framework we wish had existed when we started.

The Agent Operating System is the result of real deployments, real mistakes, real corrections, and a genuine commitment to figuring out what it actually takes to build an AI agent worth trusting.

If you're deploying agents in your business — or thinking about it — this is the place to start.